From Plato to Gradient Descent: The Frame We Forgot We Chose

We talk endlessly about what AI can and cannot do. We talk very little about the assumptions built into the question itself. This dialogue follows a philosopher and an AI practitioner through twenty-five centuries of choices (by Galileo, Newton, Turing, Shannon, Hodgkin and Huxley), each one narrowing what counts as a legitimate description of the world. The practitioner keeps saying: but it works. The philosopher keeps asking: works at what, exactly?

Practitioner: I’ll be honest, I find the "philosophy of AI" a bit indulgent. We aren’t guessing anymore. We’re building systems that work. GPT, AlphaFold, Sora... these aren't accidents. We’ve clearly cracked the code on how intelligence functions.

Philosopher: I don’t doubt the results. But results are seductive; they act as a veil. They make it nearly impossible to see the radical assumptions you had to make just to get them.

Practitioner: What assumptions? We have data, we have a loss function, and we minimize it. The math is the only "assumption" there.
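(An aside for readers who want the Practitioner's recipe spelled out. What follows is only a minimal sketch, assuming a toy straight-line model and a mean-squared-error loss; the function name, the data, and the learning rate are illustrative choices, not anything taken from a real system.)

```python
def gradient_descent(xs, ys, lr=0.05, steps=5000):
    """Fit y ~ w*x + b by repeatedly stepping downhill on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Move each parameter a small step against its gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical toy data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]
w, b = gradient_descent(xs, ys)
```

Everything the procedure "knows" about the data lives in the two numbers it returns; that is the sense of "minimize" at stake in the exchange above.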

Philosopher: But the math is a choice made centuries ago. Let's look at what you’ve inherited. It starts with Plato and Aristotle. They gave us the "Great Observer" model, the idea that the world is a finished object sitting "out there," and our job is to map it. But Aristotle at least kept things "thick." He believed in Final Causes, that you couldn't understand a seed without the purpose of the tree it becomes.

Practitioner: But purpose isn't "tractable." You can't put "purpose" into a Python script.

Philosopher: Exactly. And that’s where the pruning began. Galileo didn't just discover that nature was mathematical; he decided it was. He took the "lived world" (the smell of a rose, the sting of a bee) and called its contents "secondary qualities." He threw them out because they couldn't be measured. He left us with a skeleton and told us it was the whole person.

Practitioner: But that skeleton allowed Newton and Leibniz to give us the Calculus. We finally got a language for change!

Philosopher: A specific kind of change. By turning time into a variable, t, cut into infinitesimal steps dt, they spatialized it. They turned the flow of life into a series of frozen snapshots on a coordinate grid. Bergson warned us about this. He said "Duration" is a melody, but science treats it like a sheet of music where every note happens at once.

Practitioner: Look, I use Transformers. They handle "sequences" perfectly.

Philosopher: Do they? A Transformer doesn't experience "before" or "after." It uses positional encodings, literally turning time into a set of spatial coordinates so it can process the whole string simultaneously. It has no "now." It’s exactly what Bergson feared: you’ve killed time and called the corpse a "sequence."
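(An aside on what "turning time into coordinates" can look like in practice. The sketch below assumes the fixed sinusoidal scheme from the original Transformer paper; many current models use learned or rotary position schemes instead, and the function name here is purely illustrative.)

```python
import math


def sinusoidal_positional_encoding(num_positions, d_model):
    """Return one d_model-sized coordinate vector per position in the sequence."""
    table = []
    for pos in range(num_positions):
        row = []
        for i in range(d_model):
            # Each pair of dimensions (2k, 2k+1) shares one wavelength.
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            # Even dimensions take the sine, odd dimensions the cosine.
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


# "First", "second", "third" and "fourth" become four points in an 8-dimensional space.
pe = sinusoidal_positional_encoding(num_positions=4, d_model=8)
```

The whole table can be computed before any token is read, which is the Philosopher's point: order is supplied as an address, not lived as a succession.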

Practitioner: (Frowning) Okay, that’s a fair hit. But what about the mind?

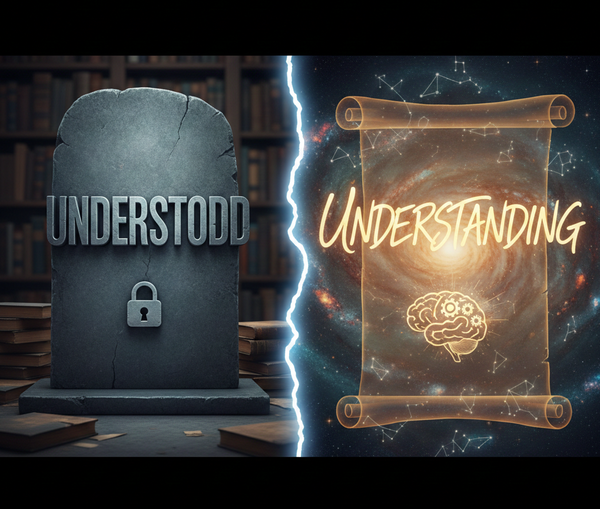

Philosopher: That’s where Turing and Shannon finished the job. Turing made a modest mathematical claim. He asked: can we give a precise, formal definition of computation? He answered with a very simple abstract machine and argued that any effectively calculable function can be computed by it. This was a claim about mathematics. But it was immediately inflated into an ontological claim: everything that matters about intelligence can be expressed as computation. Then Shannon arrived and turned "Information" into a measurable quantity, something independent of meaning. Now you can ask how many bits the visual cortex processes per second. The question would have been meaningless before Shannon.
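(An aside on "independent of meaning." The sketch below assumes the simplest possible setting, the empirical entropy of the characters in a string; the function name is illustrative, and real channel or neural estimates are far more involved.)

```python
import math
from collections import Counter


def shannon_entropy_bits(message):
    """Average bits per symbol, using the symbol frequencies observed in the message."""
    counts = Counter(message)
    total = len(message)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Two words with different meanings but the same letters.
h_listen = shannon_entropy_bits("listen")
h_silent = shannon_entropy_bits("silent")
```

Because the measure sees only symbol frequencies, the two anagrams score identically; whatever distinguishes "listen" from "silent" for a reader does not enter the calculation.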

Practitioner: Information is physical. We measure it in bits.

Philosopher: Only because Shannon redefined it that way! Before 1948, the idea that a neuron firing and a transistor switching were "the same thing" would have been nonsensical. But once Hodgkin and Huxley modeled the neuron in 1952 as an electrical circuit governed by differential equations, the deal was sealed. We didn't just find a model for the brain; we decided the brain was the model.

Practitioner: (Pauses) So you’re saying that when I run Gradient Descent, I’m not just optimizing a network... I’m performing a 300-year-old ritual?

Philosopher: Precisely. Gradient Descent is the ultimate triumph of the Leibnizian dream. You’ve assumed that "Understanding" is just a point on a high-dimensional mathematical surface. You’ve assumed that if you can predict the next token accurately enough, the "meaning" is irrelevant. You are standing at the end of a long hallway where every window onto "lived experience" has been boarded up, one by one, over the past 2,500 years!

Practitioner: But the results... they’re so close to human. If a model behaves like it understands, does the "lived experience" even matter?

Philosopher: Kant would say the model only "works" because you are the one interpreting it through your own human categories. Husserl would say you’ve mistaken the map for the territory. The danger isn't that AI will fail; the danger is that we will succeed in defining "Humanity" down to the level of the machines we’ve built.

Practitioner: So what do I do? Stop coding?

Philosopher: No. Keep building. But develop "Assumption Literacy." See the frame. When you say "The model knows," remember that "knows" is being used in a very narrow, pruned, 2,500-year-old sense. The next breakthrough won't come from more data; it will come from someone who realizes they’ve been standing in a room they forgot had a door.

Practitioner: So, the "cage" isn't the code. The cage is the very belief that the world is a set of data points waiting to be scraped. We’ve built a prison out of "objectivity."

Philosopher: Exactly. We have spent centuries perfecting the art of the spectator. We imagine ourselves standing behind a glass pane, describing the "objects" on the other side. By the time that information reaches your model, it has been stripped of its context, its "thickness," and its vitality. It’s been pre-processed by 2,500 years of ontological pruning.

Practitioner: And my entire field is based on the idea that if I just make the glass pane clearer (more parameters, more compute), I’ll eventually see the "Truth." But you're saying the glass itself is the problem.

Philosopher: Yes. What if the "world" isn't an external objective thing to be mapped, but a horizon of possibilities created through action? Think of a blind man with a cane. Where does "he" end and the "world" begin? Is the "data" at his fingertips, or at the tip of the cane?

Practitioner: It’s the interaction. The "meaning" of the sidewalk only exists because he’s trying to walk on it.

Philosopher: Precisely. If you want to break out of the cage, you have to move from Representation to Enaction. Your models are currently "brains in a vat," fed a diet of frozen symbols. They are passive, digesting dead history. To truly "crack the code" of intelligence, we have to stop trying to describe a finished universe and start building systems that have to care about their survival within a medium.

Practitioner: So, intelligence isn't a "loss function" minimized on a static dataset. It's the ability of a living system to "bring forth" a world through its own needs and movements.

Philosopher: That is the "door" I spoke of. The moment you stop seeing the mind as a mirror of nature and start seeing it as a participant in nature, the 300-year-old ritual of the "Great Observer" ends. You stop building better cages and start building something that can actually breathe.